Why Most AI Marketing Advice Is Setting You Up to Fail (And What Smart Businesses Do Instead)

Strategic Analysis by: Insight2Strategy

Published: March 9, 2026

Executive Reading Time: 12 minutes

Executive Strategic Insights

- 95% of AI pilots fail to scale because companies treat AI as a technology purchase instead of an organizational capability decision

- AI scaling is 70% people and process, 30% technology — most advice inverts this ratio because the 30% is what's easy to sell

- Automation without strategy magnifies inefficiency — high-performing teams automate decisions, not just execution

- AI replaces tasks, not accountability — the 5% seeing real impact augment their best people rather than replacing them

- Strategic question: "What decision-making capability would most impact our growth, and would AI meaningfully improve that capability?"

- The framework detailed below shows how the top 5% start narrow and deep, build full-stack capabilities, and measure against revenue metrics

Introduction

If you feel like you're drowning in AI marketing advice—newsletters promising 10x growth, LinkedIn threads from "experts" who've never run a department, tools claiming to automate your way to revenue—you're not alone. We're in what I call the "Great AI Disillusionment" phase: the gap between what these tools promise and what they actually deliver has never been wider.

Here's the pattern I keep seeing: Smart executives who successfully built $5M-$20M businesses are now making uncharacteristically bad decisions about AI. Not because they've lost their instincts, but because they're following advice designed to sell technology—not drive business outcomes.

The data tells the real story. MIT research shows 95% of AI pilots fail to scale [MIT, 2025]. McKinsey found that only 11% of companies have adopted AI at scale, and just 5% see meaningful EBIT impact [McKinsey, 2024]. These aren't technology failures—they're strategy failures wrapped in a technology disguise.

This article breaks down four of the most damaging AI marketing myths, why they persist, and what the 5% who are actually winning do differently.

Myth #1: "AI Tools = AI Strategy"

The Myth

If you buy the right AI tools—the content generators, chatbots, predictive analytics platforms—the strategy will take care of itself. Good technology drives good results.

Why It's Wrong

This frames AI as a software purchase when it's actually an organizational capability decision. Tools don't create advantage—decisions do. When you treat AI as a technology decision, you optimize for features and pricing. When you treat it as a capability decision, you optimize for business outcomes.

McKinsey research supports this: AI scaling is 70% people and process, and only 30% technology [McKinsey, 2024]. Most AI advice inverts this ratio because the 30% is what's easy to sell. When companies lead with tools instead of strategy, they automate chaos. Bad messaging gets generated faster. Broken workflows get scaled. Silos become more efficient silos.

⚡ Quick Implementation Tip

Before evaluating any AI tool, define three non-negotiables: (1) What business outcome you're improving (revenue per customer, CAC, close rate), (2) What decision-making process must change to capture that improvement, and (3) What organizational readiness gaps exist. Only then should you evaluate technology options.

The Reality

AI should amplify a clear marketing strategy—not replace one. Smart businesses define three things before they ever evaluate a tool:

- What business outcome they're trying to improve (revenue per customer, cost per acquisition, time to close)

- What decision-making process needs to change to capture that improvement

- What organizational changes must happen before technology can help

Only then do they select tools—and by then, the right choice is usually obvious.
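The three prerequisites above can be treated as a simple gate that must pass before any vendor demo is scheduled. Here is a minimal Python sketch of that idea. The class name, field names, and example values are hypothetical, chosen only for illustration, and the check is deliberately crude: it confirms each answer exists, not that it is a good one.

```python
from dataclasses import dataclass, field


@dataclass
class ToolEvaluationBrief:
    """Hypothetical pre-evaluation brief: the three things to define before comparing tools."""
    business_outcome: str = ""          # e.g. "Reduce cost per acquisition"
    decision_process_change: str = ""   # how the decision will be made differently
    organizational_changes: list[str] = field(default_factory=list)

    def missing_items(self) -> list[str]:
        """Return the prerequisites that have not been written down yet."""
        missing = []
        if not self.business_outcome.strip():
            missing.append("business outcome")
        if not self.decision_process_change.strip():
            missing.append("decision-making process change")
        if not self.organizational_changes:
            missing.append("organizational changes")
        return missing

    def ready_to_evaluate_tools(self) -> bool:
        """Tool selection starts only when nothing is missing."""
        return not self.missing_items()


# Illustrative draft: only the outcome has been defined so far.
draft = ToolEvaluationBrief(business_outcome="Reduce cost per acquisition")
print(draft.missing_items())
print(draft.ready_to_evaluate_tools())
```

The point of the sketch is the ordering, not the code: the tool comparison is blocked until the strategy questions have answers.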

Example

Two B2B companies invested in the same AI-powered content platform. Company A deployed it immediately to "fix" lead quality issues and triple their output. Three months later, their content volume had tripled, but lead quality dropped because their ICP and value proposition were still unclear. AI just produced more irrelevant content faster.

Company B spent six weeks before deploying. They clarified their positioning, mapped customer journey friction points, and redesigned their content strategy around those insights. Then they used AI to accelerate production of the right content. Lead quality improved within one quarter, and deal velocity increased 15%.

Same tool. Different business outcomes. The difference wasn't the technology.

Myth #2: "More Automation Means Better Marketing"

The Myth

If you automate enough of your marketing—emails, ads, content distribution, social scheduling—performance will automatically improve. Automation equals efficiency, and efficiency equals results.

Why It's Wrong

This myth confuses activity with outcomes. Automation without intent leads to noise, not impact. Many teams automate emails, ads, and content without asking whether those assets should exist in the first place.

This helps explain why only 5% of companies report meaningful EBIT impact from AI despite widespread experimentation [McKinsey, 2024]. Automation magnifies whatever you feed it, whether that is effectiveness or inefficiency. Technology is never a strategy; it's an accelerant. If your current marketing strategy is mediocre, AI will only help you be mediocre faster.

The Reality

High-performing teams automate decisions, not just execution. They use AI to:

- Prioritize audiences based on buying signals and behavioral patterns

- Identify which messages resonate before scaling campaigns

- Optimize budget allocation in real time based on changing conversion patterns

- Test strategic positioning variations across segments simultaneously

These aren't efficiency improvements—they're effectiveness improvements. Execution becomes faster because the thinking is better.

Example

An e-commerce brand automated weekly promotions across every channel to "save time and increase reach." Engagement dropped 20% because they were creating promotional fatigue.

After stepping back, they used AI to analyze purchase behavior and timing patterns. They reduced campaigns by 40%, focused on the segments and moments most likely to convert, and personalized messaging based on customer lifecycle stage. Revenue per campaign increased 28%, and overall email revenue grew 45%.

They sent fewer emails but made smarter decisions about which emails to send. That's the difference between automation and strategy.

Myth #3: "AI Will Replace Marketing Expertise"

The Myth

AI can think like a senior marketer—generate strategies, create campaigns, optimize performance—so you don't need expensive marketing talent anymore. The bot does it now.

Why It's Wrong

This is the most dangerous myth. AI doesn't understand context, nuance, brand positioning, or strategic trade-offs the way humans do. It predicts based on patterns, not business priorities. And when everyone can generate blog posts by the thousand, the value of any single post approaches zero.

MIT research confirms that while AI excels at optimization tasks, it struggles with cross-functional judgment and strategic trade-offs—the exact areas where marketing leadership matters most [MIT, 2025]. The 5% seeing real impact aren't replacing people with AI—they're augmenting their best people.

⚡ Quick Implementation Tip

Reframe AI as a drafting partner rather than a replacement, with your marketers acting as its strategic editors. Use it to generate ten times as many options, then apply human judgment to select, refine, and align with brand strategy. Companies hiring "AI editors" and strategists are outperforming those cutting headcount to "save costs."

The Reality

AI replaces tasks, not accountability. It's a co-pilot, not an autopilot.

The strategic value isn't in the output (the words, images, or campaigns). It's in the input: the strategy, brand voice, unique customer insights, and business judgment that guides what AI produces.

Smart organizations use AI to:

- Free senior marketers from low-value tactical work

- Improve decision quality with better data inputs

- Enable faster experimentation with proper guardrails

- Scale what works without scaling what doesn't

Human expertise sets direction. AI accelerates execution. Companies that understand this distinction are hiring editors and strategists who know how to direct AI, rather than cutting headcount to "save costs."

Example

A SaaS company used AI to generate campaign ideas and messaging variations. But they left prioritization, brand alignment, and strategic positioning to their marketing leadership. The result? They tested 3x more concepts in the same timeframe without diluting brand positioning or confusing the market. Close rates improved because they were running better experiments, not just more experiments.

Meanwhile, a competitor tried to replace their marketing team with AI-heavy workflows. Within six months, organic traffic plummeted as Google's ranking systems demoted their "hollow" AI content, which lacked the original research and expertise that Google's E-E-A-T criteria (experience, expertise, authoritativeness, trustworthiness) reward. They're now rebuilding the team they eliminated.

Myth #4: "You Need AI to Compete"

The Myth

Every competitor is adopting AI. If you don't move fast, you'll be left behind. The risk of waiting exceeds the risk of moving. You need AI or you'll lose.

Why It's Wrong

This creates urgency without direction, and it's how companies end up spending six figures on "AI transformation" that transforms nothing. The data shows most companies aren't winning with AI yet; they're still trying to. Only 11% have adopted AI at scale [McKinsey, 2024]. Many of the other 89% are stuck in pilot hell, burning budget on experiments that don't connect to business outcomes.

Your competitive risk isn't that you're moving too slowly on AI. It's that you're moving quickly on the wrong things.

The Reality

You don't need AI to compete. You need better decision-making to compete. Sometimes AI enables that. Often it doesn't.

The strategic question isn't "How do we implement AI?" It's "What decision-making capability would most impact our growth, and would AI meaningfully improve that capability?"

For some businesses, the answer is yes—AI can unlock a meaningful advantage in personalization, segmentation, or predictive analytics. For others, the constraint is strategy, positioning, or execution, and AI would just automate existing problems faster.

The companies winning with AI are the ones who can tell the difference. They start narrow and deep, not broad and shallow. They pick one high-impact problem, build the full capability stack for that problem (data, process, people, technology), measure against revenue metrics, and then expand.

Example

Two marketing leaders attended the same AI conference and returned energized to "transform with AI."

Leader A launched AI initiatives across content, advertising, social media, and analytics. Two years later, they have 12 AI tools deployed, no measured ROI, and a team skeptical of the next "transformation" initiative.

Leader B asked: "Where is our judgment most constrained by data volume or speed?" The answer was customer segmentation—they had rich behavioral data but couldn't analyze it fast enough to personalize at scale. They built one AI capability around that problem. It changed how they go to market. Customer acquisition costs dropped 18% and revenue per customer improved 23%.

Leader A is still looking for ROI. Leader B is expanding to the next capability. Same starting point. Different strategic discipline.

What Smart Businesses Do Instead

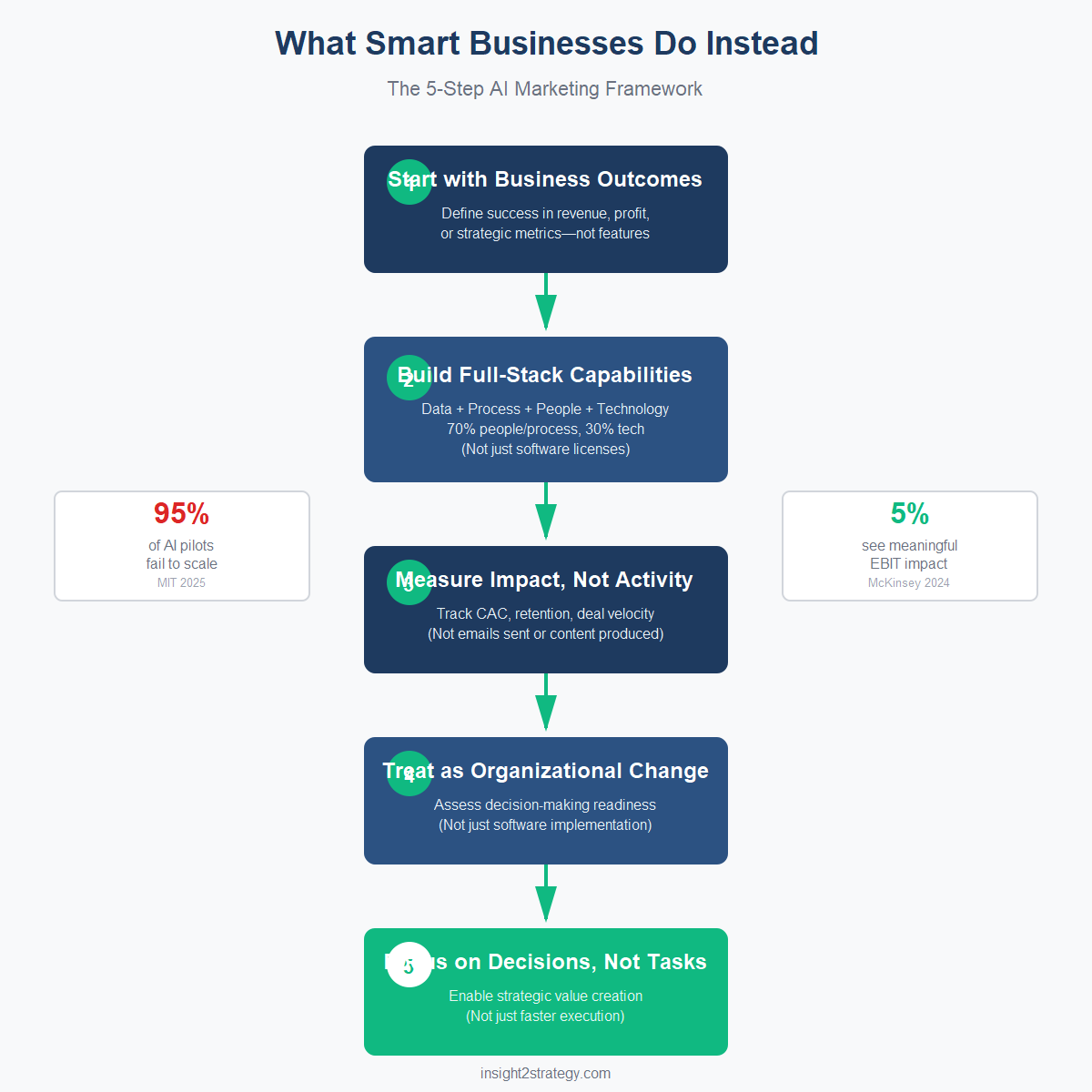

The 5% seeing real returns from AI aren't following the popular advice. They're following a different playbook:

1. Start with Business Outcomes, Not Technology Capabilities

Define success in revenue, profit, or strategic metrics—not features or activity. Work backward to determine if AI can help you get there. If it can't meaningfully improve a decision that matters, don't force it.

2. Build Full-Stack Capabilities, Not Point Solutions

AI without clean data fails. AI without process changes creates chaos. AI without trained people gets abandoned. The 70/30 rule matters: 70% people and process, 30% technology. Build the complete capability stack or don't start.

3. Measure Impact, Not Activity

Track business metrics: revenue per customer, customer acquisition cost, deal velocity, retention rates. Activity metrics—emails sent, content produced, hours saved—are vanity metrics that mask failure.

4. Treat AI as Organizational Change, Not Software Implementation

The question isn't "Can our marketing team use this tool?" It's "Is our organization ready to change how it makes decisions based on what AI reveals?" Most organizations aren't. That's why most AI projects fail.

5. Focus on Decisions, Not Tasks

The strategic value of AI isn't doing what you do now but faster. It's enabling decisions you couldn't make before—or making them at a speed and scale that changes what's possible.

📊 Implementation Framework

This five-step approach represents how the top 5% systematically build AI capabilities that drive revenue impact. The key difference? They treat AI as an organizational capability decision, not a technology purchase.

Need help adapting this framework to your specific business situation? Let's discuss your implementation approach and identify your highest-impact starting point.

Getting Started

If you're frustrated with AI advice that sounds impressive but goes nowhere, ignore the tools for now. Ask one question: What's the highest-impact marketing decision we make repeatedly, where better data or faster analysis would meaningfully improve outcomes?

Answer that question. Build the capability to improve that decision. Measure the business impact. Then expand.

The potential is real—PwC estimates AI could contribute $15.7 trillion to the global economy by 2030 [PwC Global AI Study]. But capturing that value requires rejecting most of the advice circulating right now and getting strategic about what you're actually trying to achieve.

This post is part of The B2B Marketing Reality Check, the strategic framework for growth-stage B2B tech companies, now available in paperback and Kindle. Every topic we cover in this blog goes deeper in the book, with frameworks, diagnostics, and quick wins you can put to work immediately. Get the Free PDF → Want to work through the framework hands-on? Get the companion workbook →

Frequently Asked Questions

How long does building an AI marketing capability typically take?

For a focused, narrow-and-deep approach (recommended), expect 8-12 weeks to build your first capability: 2-3 weeks for strategic planning and capability assessment, 4-6 weeks for data preparation and process design, and 2-3 weeks for pilot deployment and measurement. Companies that try to transform everything at once typically spend 18-24 months without measurable ROI.

What budget should we allocate for AI marketing initiatives?

The 5% seeing results don't start with budget—they start with business outcomes. Allocate based on the ROI potential of the decision you're improving. For example, if better customer segmentation could improve revenue per customer by 15-20%, calculate that impact and work backward to determine the investment ceiling. Most successful implementations invest 70% in people and process (training, workflow redesign, change management) and 30% in technology.
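The work-backward arithmetic can be sketched in a few lines of Python. Only the 15-20% uplift range and the 70/30 split come from the answer above. The customer count, baseline revenue per customer, and required return multiple are hypothetical inputs for illustration, and the function name is our own.

```python
from dataclasses import dataclass


@dataclass
class InvestmentCeiling:
    annual_uplift: float        # expected extra revenue per year
    ceiling: float              # most you would invest to capture that uplift
    people_and_process: float   # 70% share of the ceiling
    technology: float           # 30% share of the ceiling


def investment_ceiling(customers: int,
                       revenue_per_customer: float,
                       uplift_rate: float,
                       required_return_multiple: float) -> InvestmentCeiling:
    """Work backward from an expected revenue uplift to a spending ceiling."""
    if required_return_multiple <= 0:
        raise ValueError("required_return_multiple must be positive")
    annual_uplift = customers * revenue_per_customer * uplift_rate
    # Assumption: the uplift must repay the investment this many times over in a year.
    ceiling = annual_uplift / required_return_multiple
    return InvestmentCeiling(
        annual_uplift=annual_uplift,
        ceiling=ceiling,
        people_and_process=ceiling * 0.70,
        technology=ceiling * 0.30,
    )


# Hypothetical business: 400 customers at $10,000 each, requiring a 3x first-year return.
low = investment_ceiling(400, 10_000, 0.15, 3.0)
high = investment_ceiling(400, 10_000, 0.20, 3.0)
for label, result in (("15% uplift", low), ("20% uplift", high)):
    print(f"{label}: ceiling ${result.ceiling:,.0f} "
          f"(people/process ${result.people_and_process:,.0f}, "
          f"technology ${result.technology:,.0f})")
```

The required return multiple is the judgment call here. A stricter multiple lowers the ceiling, which is the intended effect: the budget follows from the outcome, not the other way around.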

When should we hire outside AI expertise versus handling it internally?

Hire outside expertise when: (1) you're defining your AI strategy and capability roadmap for the first time, (2) you lack internal experience with data architecture and ML model deployment, or (3) you need to accelerate learning and avoid expensive mistakes. Keep it internal when: you have clear strategic direction, you're expanding proven capabilities to new areas, or you're optimizing existing AI workflows. The best approach? External strategic guidance upfront, internal team development for execution.

How do we measure ROI on AI marketing investments?

Track business metrics, not activity metrics. Start with a baseline measurement of the decision you're improving (e.g., current customer acquisition cost, revenue per customer, deal close rate). Deploy your AI capability and measure the same metrics after one quarter. The 5% seeing results track: revenue impact (increased revenue per customer, improved win rates), cost impact (reduced CAC, improved marketing efficiency), and speed impact (faster decision-making, reduced time to value). Avoid vanity metrics like "emails sent" or "content produced."
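A baseline-versus-quarter comparison like the one described above might be sketched as follows. The metric names and all figures are hypothetical; the numbers are chosen to mirror the Leader B example earlier in the article, not drawn from any measured result.

```python
def percent_change(baseline: float, current: float) -> float:
    """Percentage change from baseline to current."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100


# Metrics where a lower number is the improvement.
LOWER_IS_BETTER = {"customer_acquisition_cost"}


def compare_to_baseline(baseline: dict[str, float],
                        after_quarter: dict[str, float]) -> dict[str, tuple[float, bool]]:
    """For each metric, return (percent change rounded to 0.1, whether it improved)."""
    report = {}
    for metric, before in baseline.items():
        change = percent_change(before, after_quarter[metric])
        improved = change < 0 if metric in LOWER_IS_BETTER else change > 0
        report[metric] = (round(change, 1), improved)
    return report


# Hypothetical measurements taken before deployment and one quarter after.
baseline = {
    "customer_acquisition_cost": 500.0,
    "revenue_per_customer": 2000.0,
    "close_rate": 0.20,
}
after_quarter = {
    "customer_acquisition_cost": 410.0,
    "revenue_per_customer": 2460.0,
    "close_rate": 0.22,
}

report = compare_to_baseline(baseline, after_quarter)
for metric, (change, improved) in report.items():
    print(f"{metric}: {change:+.1f}% ({'improved' if improved else 'not improved'})")
```

Note what is absent from the inputs: emails sent, content produced, hours saved. If a metric would not appear on a revenue or cost line, it does not belong in the comparison.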

Conclusion

AI isn't failing businesses—bad advice is.

The gap between AI's promise and its performance in most businesses isn't a technology gap. It's a strategy gap. The advice flooding your inbox is designed to sell technology, not transform your business. It optimizes for urgency, not outcomes. It promises efficiency, not competitive advantage.

That's why 95% of AI pilots fail to scale [MIT, 2025].

When organizations treat AI as a shortcut, they get short-term activity and long-term disappointment. When they treat it as a strategic capability—grounded in people, process, and clear business outcomes—they see real returns.

The companies in the 5% aren't smarter about AI technology. They're clearer about business strategy. They know what decisions matter. They know what would improve those decisions. And they use AI only when it makes those improvements possible.

If you're ready to cut through the noise and build an AI capability that drives measurable business outcomes—not just activity—that's exactly what we help growing businesses do.

Ready to Build an AI Marketing Capability That Actually Drives Revenue?

Every business situation is unique. Let's discuss how the 5% framework applies to your specific challenges and identify your highest-impact starting point.

No sales pitch. Just strategic insights on how to approach AI as a capability decision—not a technology purchase.

About Insight2Strategy

We help growing businesses ($5M-$20M revenue) build marketing strategies that drive measurable outcomes. Our approach focuses on strategic frameworks, not tactical templates—teaching you how to make better decisions, not just execute faster tasks.
